The central theme that emerged throughout the day was:

How can architectures be standardized and automated to make them resilient, scalable, and governable at scale?

Numerous presentations addressed this global issue from different angles: large-scale Kubernetes, the industrialization of CI/CD and the SRE approach, internal platforms for developers, technical redesign of applications, and resilience and security "by design." For each theme, we will highlight the solutions presented, the lessons learned, the recommended best practices, and the limitations or challenges identified.

Deploy standardized Kubernetes clusters at scale

The day kicked off with a presentation by Rachid Zarouali (a well-known Cloud Native expert in the community) on his experience with large-scale Kubernetes deployment. His opening statement was: "Today, deploying a Kubernetes cluster is easy, with many solutions available... we are spoiled for choice. What should we do when we want to deploy and manage a large number of clusters? Which solutions should we use?"

Indeed, while creating a K8s cluster has become trivial (whether via a managed public cloud or on your own servers), managing dozens or hundreds of clusters in a consistent and efficient manner poses other challenges. Rachid shared several projects undertaken to meet this challenge, comparing the possible tools and approaches, with their respective advantages and disadvantages. The stated objective was clear: to show that with the right strategy, it is "no more complicated"to administer two clusters than it is to administer a hundred!

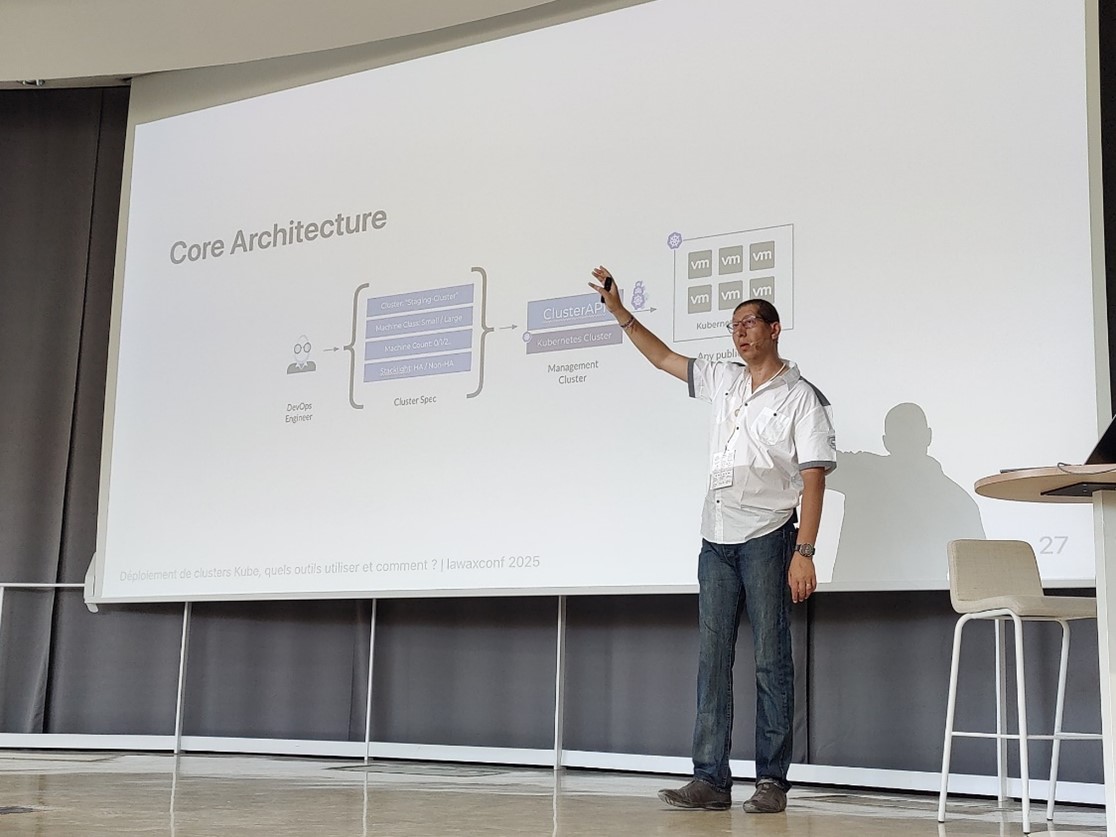

In concrete terms, one solution stands out in particular for standardizing the lifecycle of multiple clusters: ClusterAPI (CAPI). This open-source project, which is part of the Kubernetes ecosystem, allows you to declaratively define "Cluster Specifications" (specs) that are submitted to a Cluster API provider responsible for creating or modifying clusters based on these specs. It's Infrastructure as Code applied to clusters, in a way. Rachid illustrated the basic architecture of this approach: a DevOps engineer formulates the specs for the desired cluster (number and size of nodes, high-availability requirements, etc.), then via the ClusterAPI API, a management cluster will instantiate the target cluster on the chosen infrastructure (public cloud, on-premise VMs, etc.).

Diagram taken from Rachid Zarouali's presentation, illustrating the basic architecture for automated management of large-scale Kubernetes clusters via ClusterAPI. An engineer defines the desired "Cluster Spec," and ClusterAPI takes care of creating a target Kubernetes cluster, whether on virtual machines or any other provider.

This approach allows for the standardization of cluster creation and configuration (each new cluster is created based on a common template), while delegating repetitive tasks to Cloud Native tools. By combining ClusterAPI with GitOps pipelines (e.g., ArgoCD) to keep specs up to date, you get a true cluster factory that can be controlled by Git versioning.

Key takeaways from this talk: managing a large number of Kubernetes clusters becomes viable provided that you adopt a high level of automation and standard models. The benefits include increased consistency (all clusters are built according to the same pattern), reduced manual errors, and the ability to administer a fleet of clusters as a single entity. However, automation on this scale requires a significant initial investment: choosing and implementing tools (ClusterAPI or other), training teams in these new workflows, and setting up centralized monitoring to anticipate problems (because with 100 clusters, even a few isolated bugs can eventually occur). The speaker emphasized the importance of not trying to anticipate everything in advance, but rather moving forward "step by step," iterating in order to adjust tools and processes as you go, rather than trying to predict everything theoretically.

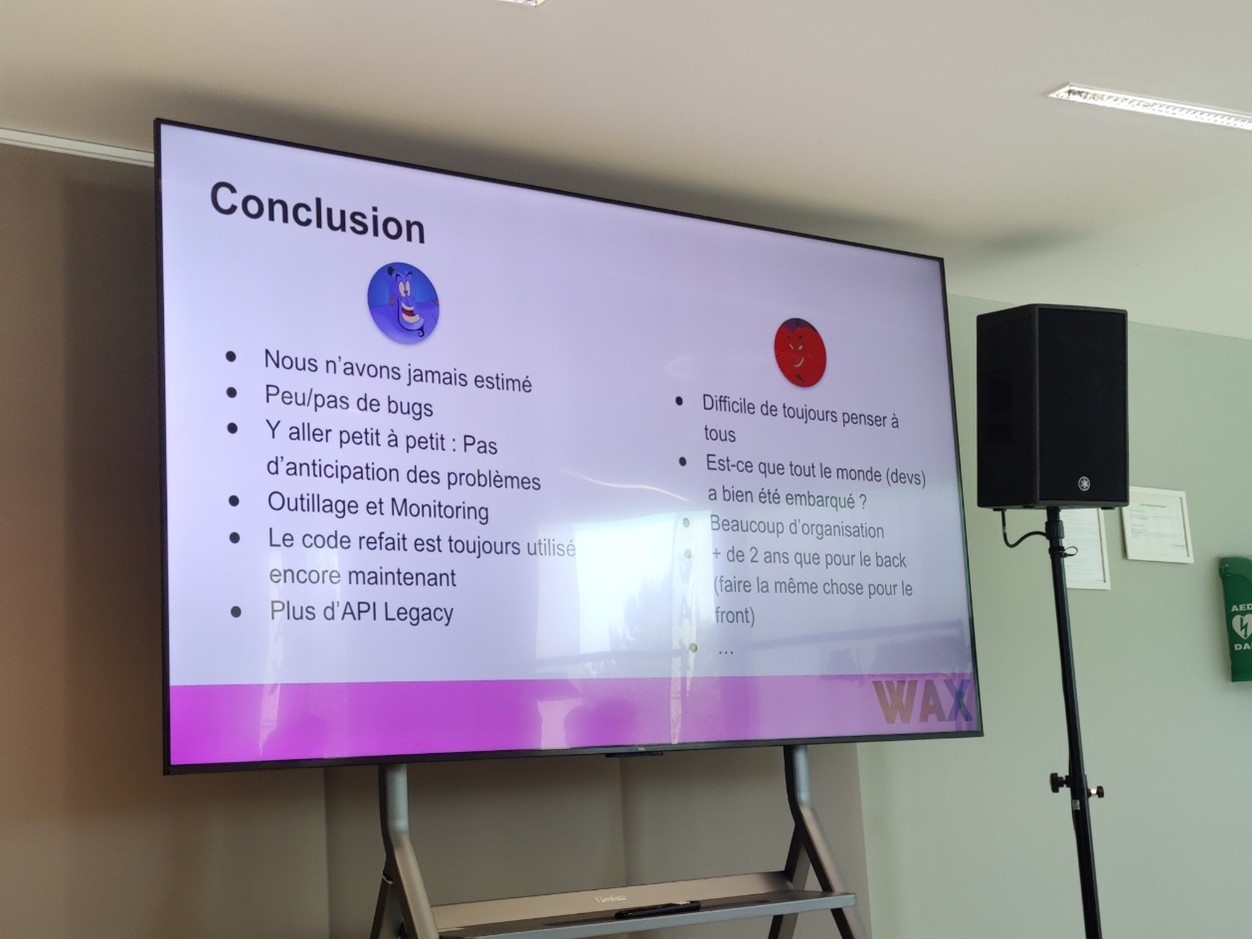

Excerpt from the concluding slide of a feedback report on the redesign of a platform (see section below). It shows, by analogy, best practices that can be applied to any technical transformation project: move forward in small increments without trying to anticipate everything, equip and monitor to detect problems as they arise, and gradually eliminate "legacy" elements once the new system is in place.

In short, thanks to tools such as ClusterAPI, it is possible to industrialize the deployment of Kubernetes to support the scalability of Cloud Native platforms. This provides additional resilience (clusters rebuilt on the fly in the event of a failure) and better governance (centralized control of Kubernetes versions, basic configurations, etc.), valuable assets for a CIO who has to manage rapid service growth.

Industrialization of CI/CD and SRE approach at scale

Let's now move on to the industrialization of CI/CD pipelines and the adoption of SRE (Site Reliability Engineering) practices on a large scale.

How can hundreds of application projects be consolidated around unified continuous integration/continuous deployment processes, while improving overall reliability?

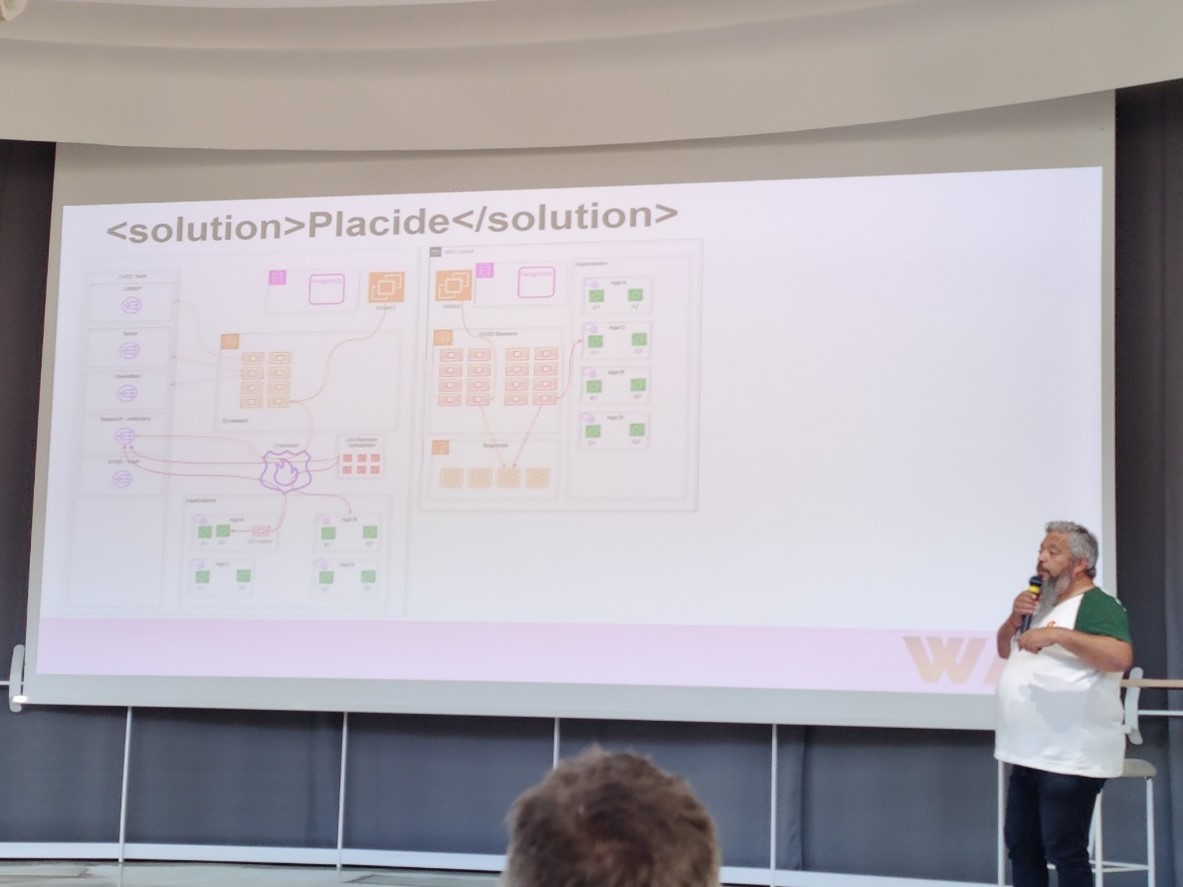

A striking example of this is a major French energy operator that developed its own software forge called "Placide." Presented by Sébastien Longo, this case study describes how, in an industrial group providing essential services, they promoted the DevOps approach and developed an SRE strategy across the entire company. Placide aims to "bring together more than 400 projects on a standardized CI/CD" in accordance with SRE principles. The initiative required defining a common vision, implementing it via a tool-based platform, and, above all, meeting the challenge of onboarding hundreds of teams onto this new tool-based chain. Such standardization brings great benefits (pooling of tools, common best practices, consolidated reliability indicators) but also requires internal change management support.

The presenter outlines the architectural requirements: GitLab base, separation of CI and CD runners, pipeline templating, integration of factory services (secrets, registry, code quality/security, etc.). The Placide platform, as a CI/CD factory, brings together pipeline templates, segmented executors, tooled integrations, and multi-project views to manage over 400 applications.

From a technical standpoint, Placide is similar to an internal CI/CD chain "as a service": code repository, CI tools, deployment orchestration, artifact management, monitoring, etc.; all provided to development teams via a set of common tools and rules. While the details of the implementation have not been fully disclosed (as is typical with in-house solutions), it is clear that the focus is on Everything as Code, integrating security into the pipeline (vulnerability testing, quality rules, etc.), and collecting SRE indicators (errors, availability, performance) for each service. Lesson learned: to bring 400+ projects onto a uniform platform, technology alone is not enough. You need a shared vision (in this case, "DevOps everywhere, SRE everywhere"), sponsorship to get the solution adopted, and a team dedicated to the tools that treats its "internal customers" (dev teams) with the same attention that a SaaS provider would give to its external customers. There were many challenges, including managing the initial heterogeneity of practices, migrating existing projects without blocking deliveries, and training developers on new tools. But the results are starting to pay off: a consistent technical base (the Placide forge) that facilitates governance (for example, compliance or security audits are simplified when everything goes through a central pipeline) and improves resilience because once SRE best practices are integrated everywhere, the organization is better able to handle incidents (earlier detection, safer deployments, etc.).

At the same time, another session entitled "Go Go Go (for ArgoCD, Golang, Golive)" illustrated an open-source approach to industrializing large-scale environment deployments via GitOps. Thomas Boullier, François Tritz, and Damien Gérard presented how they leverage the power of ArgoCD (a GitOps tool), Kubernetes Operators in Go, and Tekton CI to automate and maintain development environments on OpenShift. The focus is on an on-premise "OpenShift-centric" approach, with the design of a custom Operator in Golang to simplify the repeated deployment of dev/test environments. In practice, this means that the creation of a new environment (e.g., an isolated namespace with the entire necessary stack) is triggered via Git (declarative definition), handled by ArgoCD, and specific tasks (creation of OpenShift resources, configurations) are managed by a custom-developed operator controller. This automated chain allows environments to be replicated consistently and reliably, and to be smoothly "Go Live" in production.

Best practice to remember: using GitOps coupled with custom operators is a powerful lever for scaling operations without overloading teams; we encode the know-how (environment pattern) in the operator once and for all, then each new deployment automatically follows this model. The trade-off is technical complexity: you need to master the development of Kubernetes operators in Go and the maintenance of an advanced CI/CD chain (Tekton, ArgoCD). Nevertheless, for those who have the means, this approach offers great flexibility and drastically reduces human error in the deployment of environments, a crucial point for reliability (SRE).

Finally, one common aspect highlighted by both the Placide experience and this GitOps session is the importance of integrating security as early as possible in the pipeline. Having a WAF in production or container vulnerability scans is good, but detecting vulnerabilities upstream in the CI/CD chain is better. In this regard, a tool such as Dependency-Track (presented by Elisa Degobert) can help prioritize fixes for vulnerable dependencies based on their exploitable criticality. This is a pragmatic approach to avoid being overwhelmed by dozens of security alerts and focus efforts where they are needed.

In summary, the industrialization of CI/CD requires the implementation of uniform, integrated, and "secure by design" pipelines. Whether through a custom internal platform such as Placide or through the assembly of open-source tools (ArgoCD, Tekton, operators, etc.), the goal is to achieve reliable, reproducible, and scalable deployments. For CIOs, this means fewer operational risks (thanks to automated controls) and greater visibility into production. For developers, it provides a toolkit that speeds up deliveries while ensuring consistent quality.

Internal platforms and consistent developer experience

Another recurring theme was Developer eXperience (DevX) and internal developer platforms. In many organizations, standardizing architectures is not just about production: developers must also be equipped to work in consistent, efficient, and secure environments, regardless of the size of the team or codebase. Engin Diri and Alexandre Nedelec led an in-depth session entitled "Internal Developer Platforms: choosing the right path for your organization." They began by reminding attendees that, beyond the buzzword, IDPs have become an essential part of modern DevOps practices, aimed at streamlining developer workflows and accelerating deliveries. However, faced with a plethora of possible solutions, from open source (Backstage) to SaaS offerings (Port, Pulumi Cloud, etc.), companies must make complex decisions when developing their IDP strategy.

Their presentation provided a practical evaluation framework: what are the essential components of a modern dev platform? Should you build your platform in-house or rely on a commercial product? What are the implementation patterns that work, and the anti-patterns to avoid? These are all questions that CIOs are asking themselves today, as the promise is attractive (a "5-star" developer experience that improves productivity and reduces time-to-market) but the path is fraught with pitfalls (cost of developing an in-house platform, integration with existing systems, risk of lock-in with a SaaS solution, etc.).

A successful IDP must provide "essential functionality for developers" (ready-to-use dev environments, self-service deployment, service catalogs, security tools, etc.) while aligning with business objectives. For example, a bank will require strong integrated governance and compliance capabilities, whereas a startup will primarily seek speed of deployment. There is no one-size-fits-all solution, which is why it is important to carefully evaluate the options. Engin and Alexandre compared open-source solutions such as Backstage (highly flexible and extensible, but requiring investment to operate) and SaaS solutions such as Port or Pulumi Cloud (ready to use but less customizable). They also emphasized the Build vs. Buy question: developing your own platform can give you a tailor-made advantage, but it is a long-term project that requires a dedicated Platform Team and is only feasible if the size of the organization justifies it. Conversely, an existing solution can be deployed more quickly, at the cost of possible compromises in functionality or independence.

Ultimately, choosing the "right" IDP depends on the company's DevOps maturity, its constraints (security, regulations, etc.), and its technology ecosystem (hybrid cloud, multi-cloud, on-premises, etc.). In any case, IDPs are becoming standard in large IT departments: the gains in efficiency and reliability they provide have been widely demonstrated (particularly among web giants who are pioneers in Platform Engineering), and the tools are becoming more widely available.

To illustrate the implementation of a dev platform in concrete terms, another talk took us into the field of cloud development environments. "Develop on a toaster with Coder," the amusing title of the presentation by Melissa Pinon and Paul Vulliemin, starts with a problem experienced by many companies: "Do your developers complain that their PCs are like toasters? That environments are not consistent, and that workstations take a long time to become available?" The proposed solution: Cloud Development Environments (CDE), i.e., remote, standardized development environments that are accessible on demand. In this case, they implemented the open-source Coder platform to orchestrate dev environments hosted on Kubernetes, offering each developer an IDE accessible via a browser but running on a cloud container. This allows you to work from any machine, even a low-powered one (hence the "toaster" reference—a sluggish PC is enough to display the IDE, with most of the work being done on the server side).. The talk presented how to create a development environment in a Kubernetes cluster using Terraform, then use it from a developer's perspective. Integration with popular IDEs (JetBrains, VS Code) was highlighted, as was the fact that Coder is open source and free, which lowers the barrier to entry for experimentation.

Observed benefits: consistent environments for all developers (no more "it works on my machine"), a few minutes instead of several days to install/configure a new workstation, and the ability for developers to switch between devices without interrupting their workflow. In terms of governance, this also allows the IT department to control the catalog of images used (runtime versions, pre-installed tools, etc.) and to enhance security (the source code remains on the centralized infrastructure, not on laptops). But... this model requires a powerful and reliable cloud infrastructure (in this case, a K8s cluster that can potentially run dozens of environments in parallel), as well as an adaptation of usage (loss of offline mode, need for good connectivity). Nevertheless, for distributed or fast-growing organizations, CDEs are a valuable tool for standardizing the developer workstation and gaining agility.

In short, whether it's a comprehensive Internal Developer Platform or a targeted service such as remote dev environments, the idea is to provide teams with a unified toolkit that automates repetitive tasks and allows them to focus on business value. For a decision-maker, investing in such internal platforms means investing in long-term productivity and quality. Happy developers (with good tools) produce more reliable code; standardized processes reduce human error and facilitate the onboarding of newcomers. The trade-off is that resources must be allocated to platform engineering (a tooling team, training); an effort that can be modulated by gradually adopting ready-to-use external solutions according to priorities.

Successfully completing a technical overhaul without (too much) pain

Resilient and scalable architecture often means application redesign at some point. Mickael Wegerich's feedback entitled "Technical redesign: the magic formula to stop suffering" demonstrates this. Mickael shared the story of the in-depth redesign of a critical application, carried out with his team, and the lessons they learned to break the vicious cycle of repeated redesigns. He began by setting the scene: identifying when and how to justify a redesign to stakeholders (decision-makers, business users); which is often a delicate point because redesigns are costly and do not deliver any visible new features. In his case, they were able to convince stakeholders of the need for the project by demonstrating that the existing architecture had reached its limits (too much technical debt, inability to evolve) and that, in the long run, doing nothing would cost more than redesigning.

The "magic formula" mentioned in the title is not a miracle tool, but a combination of good approaches: organization, of course (having a dedicated team, an iterative plan, management support), but also mastery of the right tools and technical best practices. During the redesign, his team adopted an agile philosophy: dividing the project into successive deliverables in production, in order to deliver value continuously instead of a risky big bang at the end of the project. For example, they were able to deploy the new API in parallel while keeping the old one running, then gradually switch consumers to the new one. This strangling pattern avoided service interruption and allowed the new architecture to be tested gradually under real conditions. "Taking it step by step" was one of the points emphasized in the conclusion, as was the importance of monitoring throughout the process to detect regressions or performance issues as they arose.

Let's talk about the results: Mickael says that they "never really estimated" the entire project at the beginning (which is difficult with a complex overhaul), but by moving forward incrementally, they managed to maintain a sustainable pace and deliver a brand-new backend in just over two years. During this period, notably, they had very few bugs in production on the new code, thanks to testing and careful monitoring of each deployed component. The legacy code was gradually phased out and, at the time of the talk, "the rewritten code is still in use" in production, proving that the overhaul achieved its goal of sustainability. Of course, it wasn't all easy: "It's difficult to always think of everything," he notes, conceding that it is challenging to get absolutely all developers and stakeholders on board with the same level of commitment.

Furthermore, if the backend redesign has been successful, the frontend must now undergo the same process, a reminder that transformation is never complete.

Key lessons: to successfully overhaul a system, you need (1) intelligent segmentation and an incremental approach, (2) investment in tools (automated testing, reliable CI/CD, real-time monitoring) to secure each stage, (3) involve the dev teams so that they buy into the change (train them in new technologies, show them the intermediate successes), and (4) accept that there is no such thing as a perfect overhaul. We have to make compromises and strive for continuous improvement rather than perfection. Mickael's presentation ends on an optimistic note: by applying these recipes, we can "build a solid and sustainable technical foundation that is capable of evolving without calling everything into question." In other words, prevent the next overhaul by designing modular, scalable architectures now and institutionalizing best practices that avoid the accumulation of technical debt (code review, regular cleanup, documentation, etc.).

For CIOs/CTOs, this feedback offers valuable insight: it is possible to tackle an ambitious overhaul without drama, provided that the right conditions are in place (time, tools, talent) and that the project is value-driven. Rather than waiting for a system to become unmanageable and then throwing everything away to start from scratch, it is better to anticipate and invest regularly in the ongoing maintenance of the application. This can take the form of partial or continuous redesigns, which is in line with the idea of Continuous Modernization advocated today. In short, building the long-term resilience of an IS requires judicious architectural choices and the ability to periodically question them without breaking everything: a delicate balance, but one that can be achieved with method.

Cyber resilience and security "by design" at scale

Resilience is not just a matter of software architecture or infrastructure; it is also the ability to withstand shocks in terms of cybersecurity. Several feedback reports have addressed security on a large scale, whether it be dealing with an attack or managing day-to-day security in multiple projects.

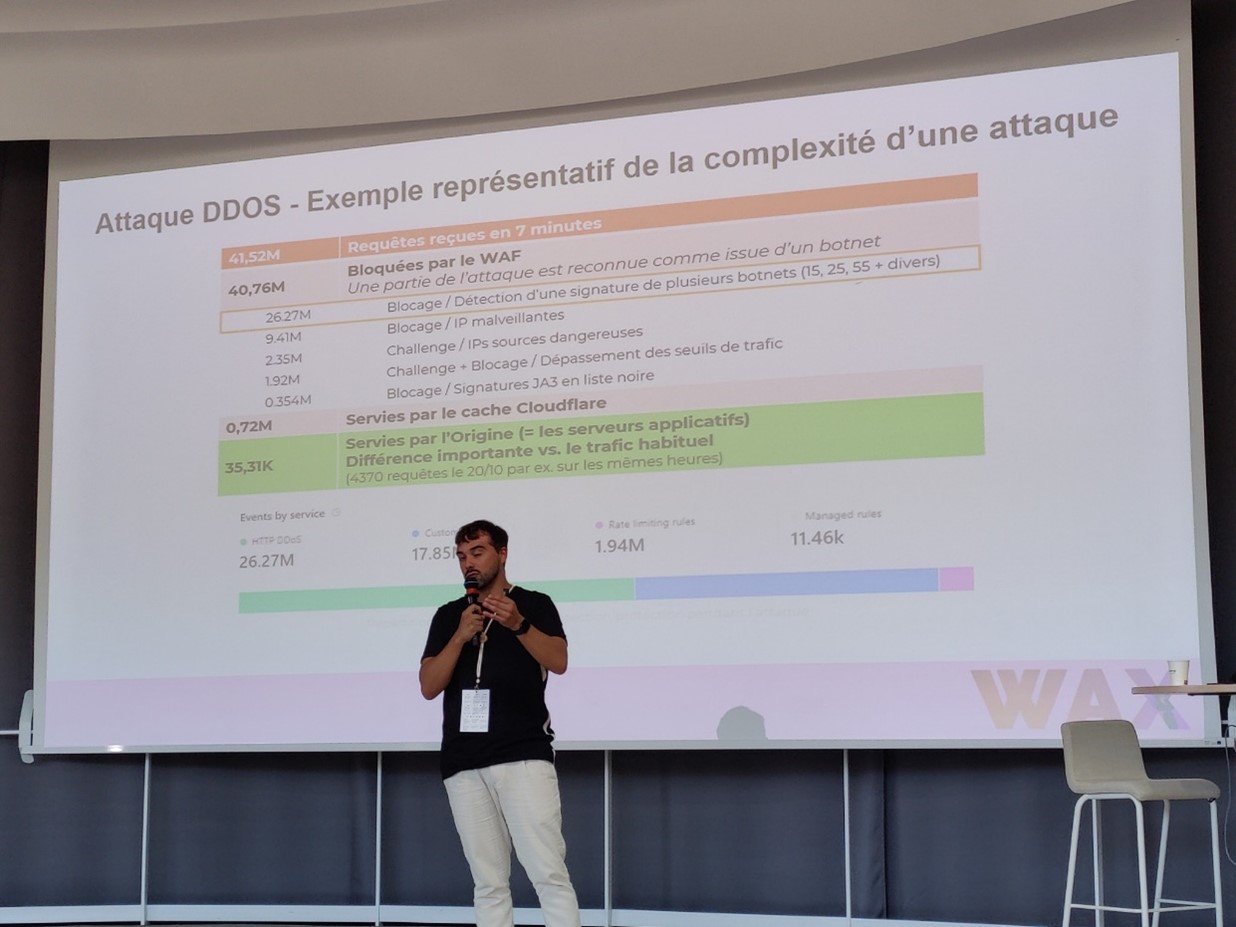

The talk by Jean-Pierre Gil and Cyril Bouissou particularly caught the audience's attention by revisiting a very real crisis scenario: "From emergency to resilience: securing an e-commerce site after a DDoS attack." They recounted how one of their clients, an e-commerce site, was suddenly paralyzed by a massive DDoS attack that shut down its online business. Total chaos, no more orders possible—every CISO's nightmare. They then helped this client restore service urgently by deploying a cloud-based anti-DDoS solution coupled with a high-performance WAF, capable of analyzing traffic in real time and automatically blocking threats. Some of the technologies used incorporate algorithms to distinguish legitimate traffic from attacks (for example, via specific botnet fingerprints). An enlightening slide showed the composition of traffic during the attack: more than 40 million malicious requests received in 7 minutes, almost all of which were blocked by the WAF, 0.7 million served by the CDN cache (Cloudflare), and only ~35,000 reached the origin servers (application servers), compared to the usual few thousand, proving the effectiveness of the shield put in place.

Excerpt from the presentation by Jean-Pierre Gil and Cyril Bouissou quantifying the impact of a large-scale DDoS attack suffered by a client. In 7 minutes, more than 41 million requests were sent from several botnets. Thanks to the rapid implementation of a cloud WAF (coupled with a CDN), almost all of the hostile traffic was automatically blocked (orange and gray areas), and only a tiny fraction reached the application servers (green).

For architecture to be resilient, it must incorporate deep defenses capable of rapid deployment right from the design stage. In this case, the presence of a CDN/WAF made the difference between a prolonged outage and a limited interruption. The presenters emphasized that, beyond the technical response, it was also necessary to manage the crisis in terms of communication, and that a clear business continuity plan is essential (who to alert, what decisions to make, and how quickly). In hindsight, the customer learned from this incident the importance of regularly testing its defense mechanisms and considering "chaos engineering" (simulating attacks to test the system and the team).

Security at scale also means being able to manage the multitude of potential vulnerabilities in dozens of software programs and their dependencies. On this front, the Dependency-Track solution presented earlier offers valuable assistance. Elisa Degobert recalled that "there are two types of companies: those that have been hacked and those that don't know they have been hacked yet," a famous quote from John T. Chambers. The idea is that prevention is better than cure: implement a DevSecOps approach where every known software dependency is tracked, and every CVE is assessed and addressed. But in practice, with hundreds of libraries and an avalanche of vulnerabilities published every month, "it's hard to know where to start." Should you prioritize fixing a critical vulnerability that is theoretical but difficult to exploit, or a less severe vulnerability that is wide open? This is precisely the dilemma that Dependency-Track attempts to address. By aggregating vulnerability information and cross-referencing it with the application's operating context, the tool helps prioritize efforts where they will have the greatest impact. For a CISO, this type of platform is an ally in governing application security at scale, moving from ad hoc alert management to a systematic and measured approach that is integrated into the CI/CD pipeline.

We should also mention Paul Pinault's feedback, which, although focused on development in the blockchain environment, highlighted the security pressure in these ecosystems. In two years of developing a DeFi solution, his team had to manage a database exceeding 10 TB, a flow of 100,000 events per minute, and deal with regular attacks motivated by financial gain (even for a few cents). This forced them to write more robust code and implement proactive monitoring to detect and counter ongoing attacks. The moral of the story: when the attack surface expands (lots of data, traffic, services), you need to equip your infrastructure accordingly (real-time monitoring, intelligent alerting, etc.) and adopt a culture of security shared by developers. This brings us back to the concept of DevSecOps, which is becoming essential on a large scale.

Finally, beyond technical tools, WAX CONF devoted an entire session to the organizational dimension of tech. Renaud Decondé, in a deliberately provocative talk (" Are you able to organize your tech teams properly?" ), reminded us that there is no miracle solution when it comes to organization. No matter how much we apply trendy agile methods, copy the "Spotify" model, or stack frameworks (SAFe, LeSS, etc.), "in the end, it's never simple," because the organization must first and foremost respond to constraints that are specific to each context. His experience, gained from working in large groups, digital services companies, and scale-ups, suggests a few principles: first, accept that there is no such thing as a "perfect organization" and that "the organization must also change" as soon as constraints change (growth, new products, new regulations, etc.). Next, stop believing in the ideal pyramid: on the contrary, the architecture and organization should be distributed as much as possible, allowing the "components" (teams) to decide and self-organize as soon as possible, which is "still the best approach." In short, favor autonomy and local adaptation rather than imposing a rigid top-down organizational chart. The message to CIOs/CTOs is therefore to focus on establishing a framework (product vision, architectural principles, basic roles) while maintaining sufficient flexibility for the organization to calibrate itself. This notion of a modular team is similar to the analogy with modular architectures: just as a governable architecture is composed of well-defined independent building blocks, an effective organization is structured around independent teams, aligned with the product, capable of reconfiguring their interactions without breaking everything. This is a key factor in the overall resilience of the company: an overly rigid organization will struggle to withstand major changes (market, technology), whereas an agile organization (in the true sense of the word) will cope better by reorganizing quickly.

Towards sustainable and responsible architecture

Designing an architecture that is "governable at scale" increasingly means taking environmental and ethical indicators into account, in addition to traditional technical or financial indicators.

Arthur Kuehn presented a simple three-step method for reducing the energy consumption of an existing application portfolio, even if it was not designed from the outset with Green IT principles in mind. The proposed approach, "Measure/Prioritize/Reduce," is intended to be "simple (achievable by three developers), Fast (less than six months), and Effective (with concrete, measurable results)." In practice, this involves first measuring the footprint of your applications (using tools to measure CPU consumption, queries, etc., possibly based on carbon cost estimators per use of cloud resources). Then, prioritize the applications or components to be optimized based on their impact and the cost/effort of correction. Finally, effectively reduce this impact through targeted optimizations (more efficient algorithms, infrastructure adapted to the load, cleaning up unnecessary data, etc.). This feedback showed that with a few motivated developers, tangible gains could be achieved on existing applications without disrupting the entire IT system. For example, by reducing the frequency of certain time-consuming tasks, X% of CPU is saved, which at the end of the year translates into kWh saved and lower cloud bills, while potentially improving perceived performance.

On the cloud infrastructure side, Elise Auvray from Scaleway shared the challenges encountered in measuring the environmental footprint of the cloud. Collecting reliable data, aggregating heterogeneous sources (energy consumption, hardware manufacturing emissions, data center water consumption, etc.), and choosing relevant life cycle assessment (LCA) methodologies proved to be no easy task. Her team developed an Environmental Footprint Calculator for their infrastructure, and one of the key messages was that measurement is a crucial prerequisite for improvement: you can only reduce what you can quantify. However, beware of missing or misleading data: the full impact of digital technology includes Scope 3 (equipment manufacturing, end of life), which is often poorly reported. Their experience therefore encourages CIOs to equip themselves with appropriate measurement tools and to contribute to improving the quality of environmental data.

Taking automation a step further, Guillaume Michalag presented CloudAssess, an open source solution developed with several partners, which aims to automate the entire Life Cycle Assessment (LCA) of cloud services. Rather than conducting these analyses manually (a slow and ad hoc procedure that is ill-suited to the dynamics of the cloud), CloudAssess offers an "LCA as Code" approach, where impact calculations are modeled and integrated directly into pipelines. They are based on standards (ISO 14000) and place particular emphasis on the quality of environmental data, using ResilioDB, for example, to reconstruct consistent data "from first principles" when supplier data is lacking. Although this remains a niche area, we are seeing the emergence of tomorrow's tools for CSR managers and cloud architects concerned with regulatory compliance (the European CSRD directive will require accurate non-financial reporting from 2025). The strategic message is that governance at scale will increasingly include these sustainability metrics: we will need to equip our platforms to track carbon footprints in the same way that we already track costs and performance.

Finally, beyond ecology, the ethical and responsibility dimension encompasses the human aspect of technology. On this point, Magali de Labareyre and Laurent Grangeau shed light on the use of AI in tech recruitment, examining the perfect match or the game of hide-and-seek with bias. While AI promises more efficient recruitment (rapid CV analysis, automated video interviews), it also carries the risk of reproducing or even amplifying discriminatory biases. For CIOs and HR departments, the challenge will be to reconcile the benefits of these new technologies with governance that guarantees fairness and transparency. Although peripheral to IT architectures, this topic reminds us that large-scale automation is not only a technical issue but also a societal one.

Strategic recommendations

This day of concrete feedback gives us an overview of best practices for building and developing standardized, automated, resilient, and scalable architectures. For decision-makers (CIOs, CTOs, CISOs), here are the main structural themes that emerged from WAX CONF 2025:

Industrialize and standardize your technological foundations: whether deploying infrastructure (Kubernetes clusters, cloud environments) or CI/CD pipelines, invest in Infrastructure as Code and declarative approaches (GitOps, operators) to manage your IT system as a coherent whole rather than a disparate collection. Initiatives such as the Placide platform at a major account demonstrate that a common software forge can support hundreds of projects while improving overall reliability. Such standardization will facilitate governance (uniform metrics, centralized compliance) and resilience (ability to replicate/rebuild quickly when needed).

- Focus on internal platforms and development cycle automation: competition to attract and retain IT talent also hinges on the quality of the tools provided internally. A well-designed Internal Developer Platform, or even targeted services such as cloud development environments, will empower your teams to be more efficient and consistent. This reduces the time to onboard new developers, standardizes deployment practices, and limits human error. As several case studies have shown, it is possible to rely on mature open source solutions (Backstage, ArgoCD, Terraform, etc.) to gradually build this platform layer rather than coding everything from scratch. The important thing is to start with the most pressing needs of developers (setup time, environment disparities) and provide an automated response.

- Adopt a DevSecOps and SRE culture on a large scale: security and reliability must be treated as top priorities from the design stage and throughout the entire lifecycle. This means integrating tools such as Dependency-Track into your pipelines to prioritize vulnerability handling, automate security testing, and continuously raise awareness among teams. Similarly, embrace SRE practices (SLO measurement, incident management training, automated and progressive deployments) not just on one or two pilot projects, but by making them systemic. As the Placide experience highlighted, spreading this culture across hundreds of teams is as much a human challenge as it is a technical one. This requires leadership (internal evangelists, trainers) and concrete feedback to demonstrate value (incident resolution time reduced thanks to SRE, security incidents avoided thanks to DevSecOps, etc.).

- Prepare for crises by building resilience: as the DDoS attack testimonials reminded us, an architecture is only resilient if it is prepared to face the worst-case scenarios. In practice, this means integrating robust solutions from the outset (WAF, CDN, automated recovery plans) and regularly testing these mechanisms (load exercises, attack simulations) to ensure they work when needed. Investing in resilience means avoiding colossal losses later on; a message that must also be understood at senior management level, as business continuity is at stake.

- Encourage continuous evolution rather than forced overhauls: feedback from successful overhauls teaches us that it is better to adopt a strategy of continuous improvement of the architecture than to wait for unmanageable debt to accumulate. In practice, allocate time in your roadmaps for regular technical projects, even small ones. Promote a modular architecture that allows components to be replaced without rewriting everything. And if a massive overhaul is inevitable, apply the principles discussed above (iteration, advanced tools, monitoring, etc.) to reduce the risks. The goal is to lessen the pain of change for both teams and end users by evolving the system in small increments.

- Integrate sustainability and ethical considerations into your choices: governance at scale now involves reporting on the environmental and social impact of IT systems. Start measuring a few indicators (application energy consumption, cloud carbon footprint, team diversity, etc.) and set improvement targets. Tools are beginning to emerge to automate this monitoring, and regulations are set to become stricter (EU green taxonomy, CSRD, etc.). Tomorrow's CIOs will need to be as knowledgeable about carbon efficiency as they are about SLAs. Similarly, remain vigilant about the ethical use of new technologies (such as AI) in your processes: they offer productivity gains (automated code review with AI, customized coding assistants) but raise governance issues (bias, confidentiality) that must be addressed with clear rules and transparency.

- Finally, pay as much attention to human organization as to technical architecture: as we have seen, there is no magic formula, but a few guiding principles do emerge. Decentralize decisions as much as possible to the teams themselves, give them responsibility for their products (product team mode), while providing a clear vision and constraints at the central level (security, compliance, business objectives). An organization that can quickly reorganize itself will be better able to take advantage of automation and evolve its architectures. Conversely, overly rigid structures may slow down or negate the gains that your new platforms/tools could bring. In short, modular architecture + agile organization = a winning combination for smooth scaling.

In conclusion, WAX CONF 2025 showed that the tech community is brimming with ingenious solutions to meet the challenges of scalability and resilience. Standardizing and automating does not mean dehumanizing or stifling creativity. On the contrary, it frees up time and energy to innovate where humans have the most added value, leaving machines and code to do the rest. It is up to CIOs and CISOs to take this feedback on board, adapt it to their context, and take the structural decisions (tools, organization, culture) today that will prepare their IT systems for the challenges of tomorrow.

To sum up: "Automate everything that can be automated, standardize everything that needs to be standardized, and you will be ready to evolve with complete governance."

Lionel GAIROARD

Practice Leader DevSecOps